Last updated: 5/5/2023

Status: Scraping behind a login works great with the following 2023 updated guide.

Web scraping gives anyone access to large amounts of data from websites.

As a result, some websites hide their content and data behind login screens. This stops most web scrapers, since they cannot log in to reach the data the user has requested.
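To make this concrete, here is a minimal Python sketch of how a script might guess that it hit a login wall instead of the page it asked for. The function name `looks_like_login_wall` and the `LOGIN_HINTS` list are hypothetical and chosen only for illustration; real sites signal this in many different ways, so treat it as a rough heuristic rather than a reliable check.

```python
from urllib.parse import urlparse

# Hypothetical path fragments that often show up in login URLs (an assumption,
# not a standard; adjust for the site you are working with).
LOGIN_HINTS = ("login", "signin", "sign-in")


def looks_like_login_wall(status_code: int, requested_url: str, final_url: str) -> bool:
    """Guess whether a response is a login wall rather than the requested page."""
    # 401 and 403 mean the server refused the unauthenticated request outright.
    if status_code in (401, 403):
        return True
    # Otherwise, check whether we were redirected to a login-looking path.
    requested_path = urlparse(requested_url).path.lower()
    final_path = urlparse(final_url).path.lower()
    redirected = final_path != requested_path
    return redirected and any(hint in final_path for hint in LOGIN_HINTS)
```

A scraper that cannot log in would see this kind of response for every protected page, which is why the data never reaches it.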

However, there is a simple way to get past a login screen and scrape data with a free web scraper.

Web Scraping Past Login Screens

ParseHub is a free and powerful web scraper that can log in to any site before it starts scraping data.

You can then set it up to extract the specific data you want and download it all to an Excel or JSON file.

To get started, make sure you download and install ParseHub for free.

Before We Start

Before we get scraping, we recommend consulting the terms and conditions of the website you will be scraping. After all, they might be hiding their data behind a login for a reason.

For reference, we recommend you read our guide on the legality of web scraping.

You can also check out our blog post about the ethics of web scraping.

Note, if you want our dedicated team to legally and ethically scrape large amounts of data for you, check out ParseHub Plus.

Next, if you’re scraping a website where account creation is free, we recommend that you create a dummy account for your scraping purposes.

To do this, feel free to use a new email account from a free email provider. For most cases, we recommend creating a dummy Gmail account.

Scraping a Website with a Login Screen

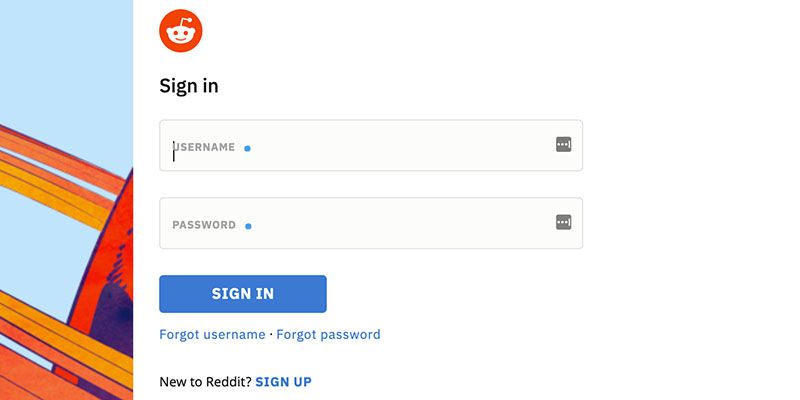

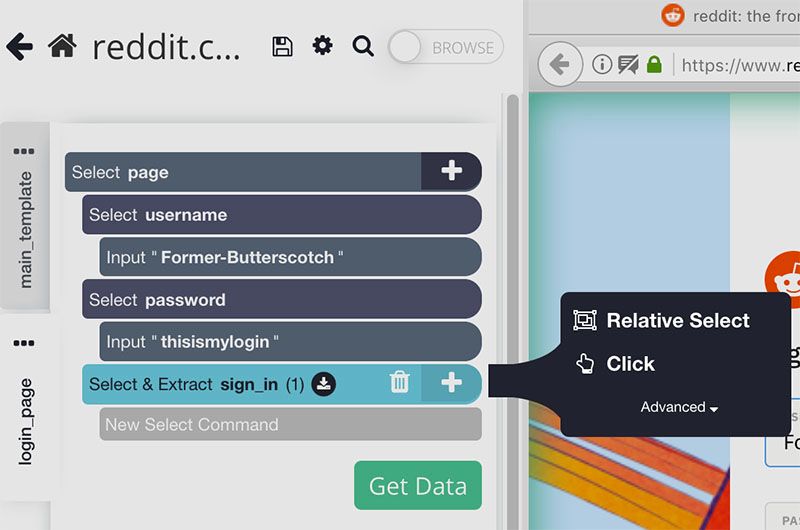

Every login page is different, but for this example, we will set up ParseHub to log in past the Reddit login screen. This is useful if, for instance, you want to scrape data from a private subreddit.

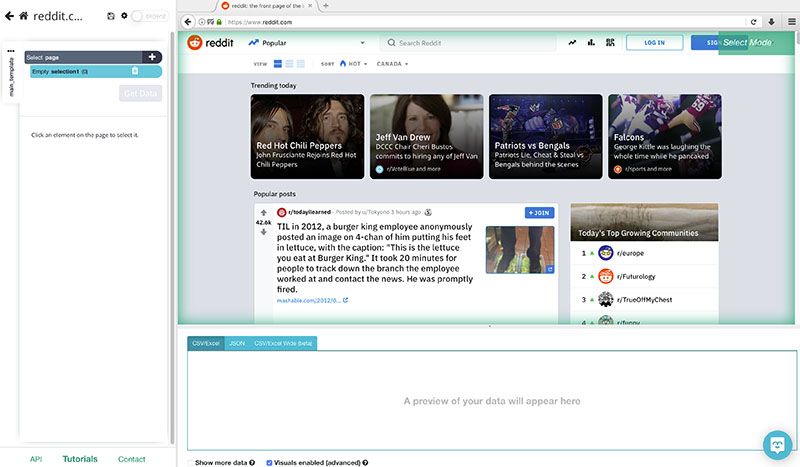

- Open ParseHub and enter the URL of the site you’d like to scrape. ParseHub will now render the page inside the app.

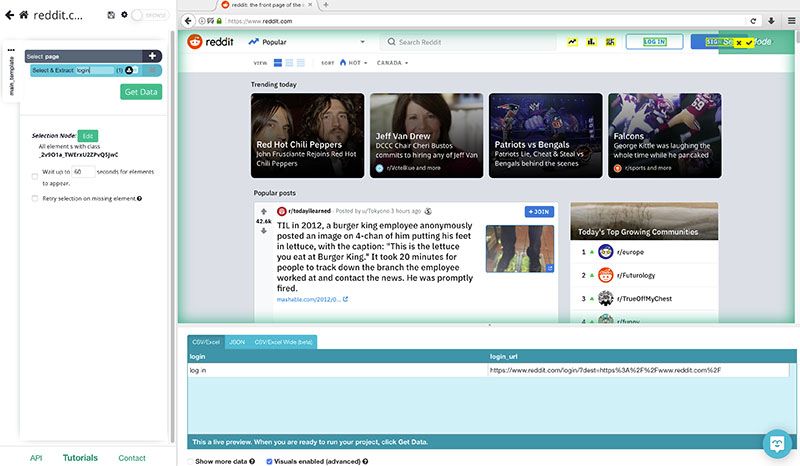

- Start by clicking on the “Log In” button to select it. In the left sidebar, rename your selection to login.

- Click on the PLUS(+) sign next to your login selection and choose the Click command.

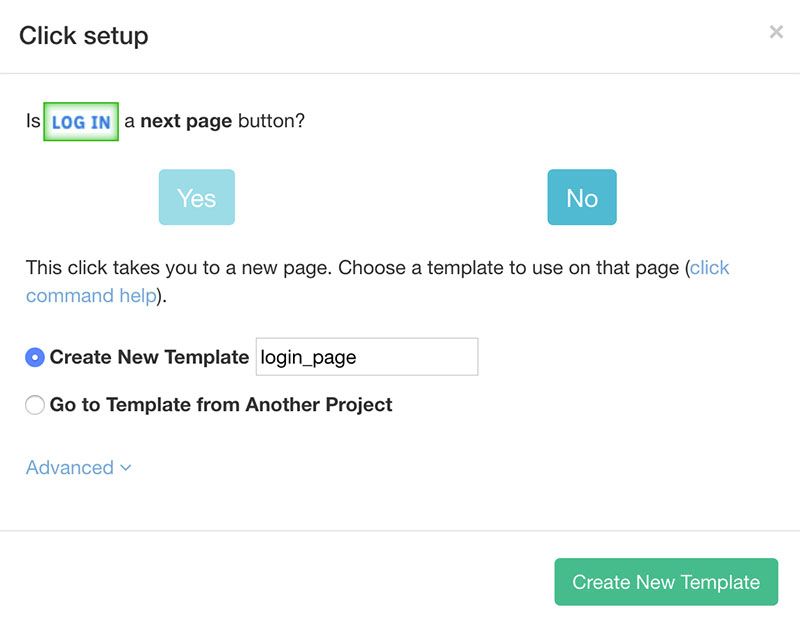

- A pop-up will appear asking you if this is a “Next Page” button. Click on “No”, name your template login_page and click “Create New Template”.

- A new browser tab and new scraping template will open in ParseHub.

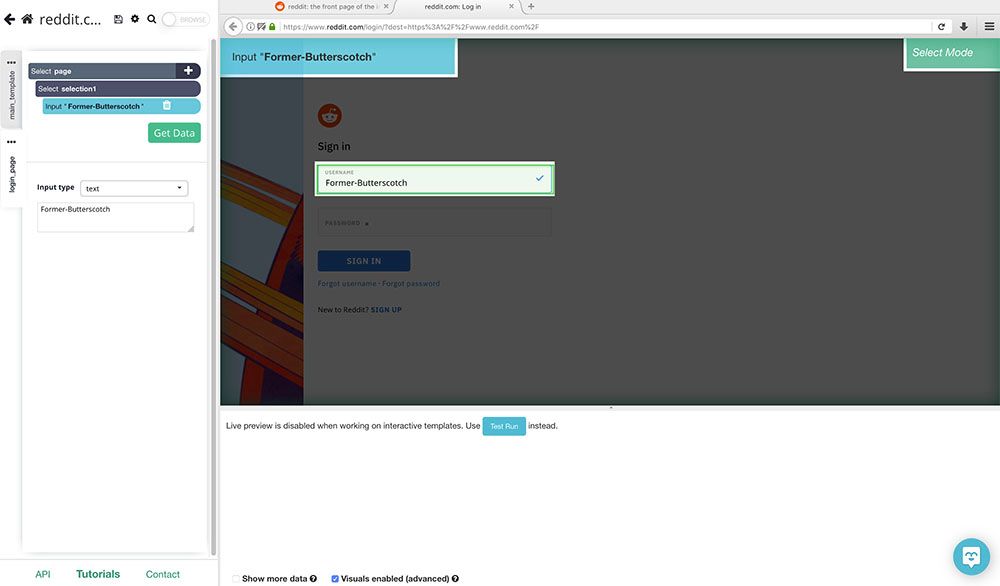

- Start by clicking on the username field. ParseHub will automatically ask you for the text to enter in this field. Enter your account username and rename the selection to username.

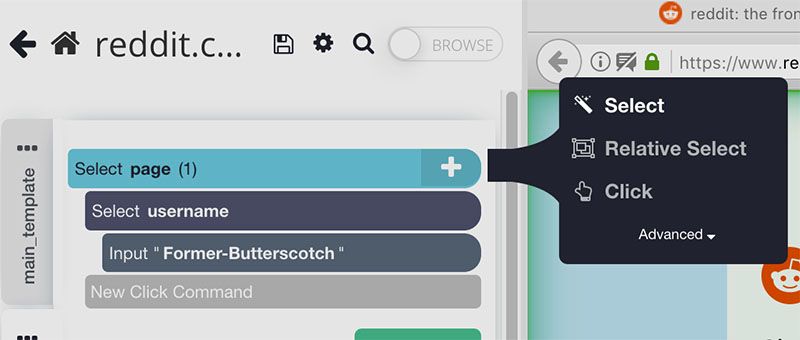

- Click on the PLUS(+) sign next to your page selection and use the Select command.

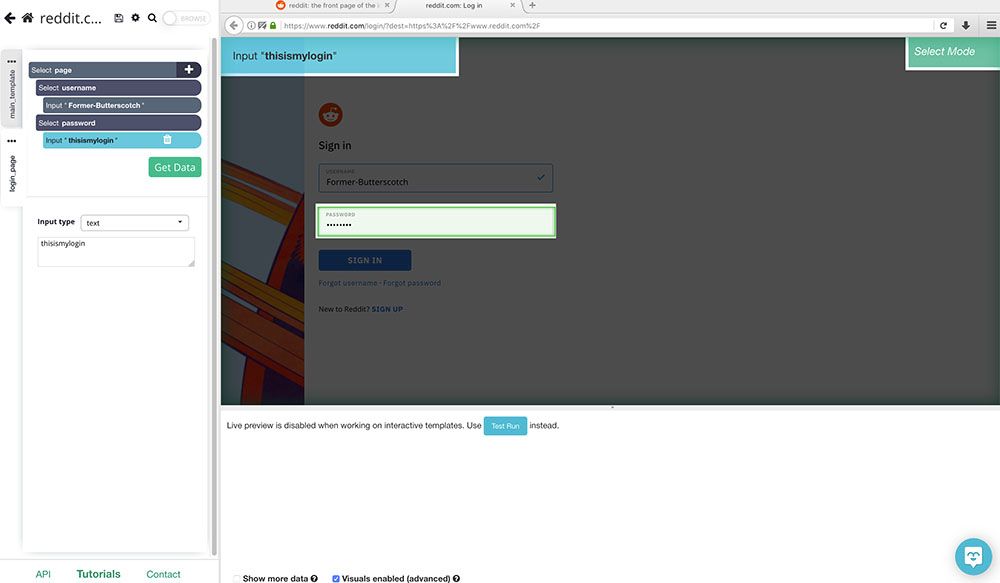

- Now, select the password field and, just as you did for the username field, enter your password and rename your selection to password.

- Click on the PLUS(+) sign next to your page selection and choose the Select command.

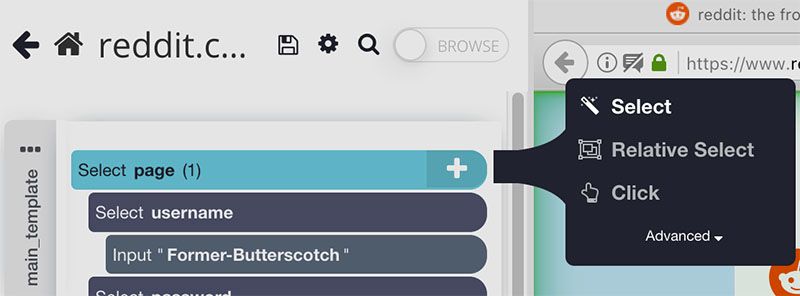

- With the Select command, click on the blue “Sign In” button and rename your selection to sign_in.

- Click on the PLUS(+) sign next to your sign_in selection and choose the Click command.

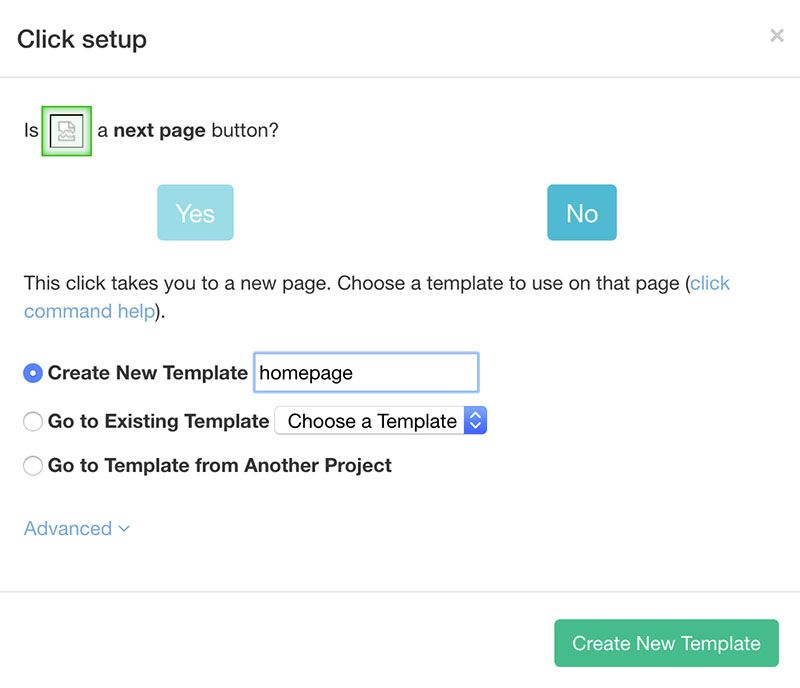

- A pop-up will appear asking you if this is a “Next Page” button. Click on “No” and create a new template. In this case, we will name it homepage.
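For comparison, the same idea (fill in a username and password, submit, then keep the logged-in session) can be sketched in plain Python. This is not how ParseHub works internally, and it is only a rough analogue under stated assumptions: the helper names `make_session` and `build_login_request`, the form field names `username` and `password`, and the `example.com` URLs are all hypothetical. Many real sites, Reddit included, add tokens or JavaScript to their login forms, so a bare form post like this often will not be enough, which is part of why a browser-based tool is convenient.

```python
import urllib.request
from http.cookiejar import CookieJar
from urllib.parse import urlencode


def make_session():
    """Return an opener that stores cookies, plus the jar holding them."""
    jar = CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))
    return opener, jar


def build_login_request(login_url, username, password):
    """Build a POST request carrying the login form fields.

    The field names "username" and "password" are assumptions; inspect the
    real login form to find the names a given site expects.
    """
    form = urlencode({"username": username, "password": password}).encode("utf-8")
    return urllib.request.Request(login_url, data=form, method="POST")


# Usage sketch (commented out because the URLs are placeholders):
# opener, jar = make_session()
# opener.open(build_login_request("https://example.com/login", "dummy_user", "dummy_pass"))
# page = opener.open("https://example.com/private").read()
```

The cookie jar plays the role of the logged-in browser tab: once the login request succeeds, later requests made through the same opener send the session cookie along automatically.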

Closing Thoughts

You now know how to easily get past any login screen while web scraping.

You can now go ahead and create the rest of your scraping project (more on this below).

Not every website is built the same, so if you run into any issues while setting up this project, reach out to us via email or chat and we’ll be happy to assist you.

While you’re at it, want to learn how to scrape data from Reddit? Read our guide on how to scrape Reddit posts and data.

Looking to scrape another website? Here’s how to scrape websites into Excel spreadsheets.

We also have many other guides, such as how to scrape any ecommerce website!

Or better yet, why not become a certified web scraping expert? Check out our FREE Web Scraping Certification courses and get certified today!

Happy Scraping!