While I was in university, one of my floormates in the dorm introduced me to sneaker collecting.

I wouldn’t call myself a “sneakerhead”, but I definitely love rocking a fresh pair of kicks.

But one of the worst things about sneaker collecting, especially as a broke college student, is the price. $750 for a pair of Levi’s 4s? No thanks.

Because of this high barrier to entry, I started comparing prices across popular online sneaker retailers. At first I maintained a spreadsheet by hand, but that was way too time-consuming.

How a Web Scraper Can Help

A web scraper such as ParseHub makes comparing sneaker prices much easier.

With a couple of simple projects and some Excel manipulation, finding the best price for a particular sneaker colorway is a breeze. I’ll show you how to do it using two of the most popular online sneaker retailers.

Before we get started, you’ll want to download ParseHub to scrape the sites. ParseHub is a powerful and FREE web scraping tool, and it’s what we’ll use for this tutorial.

Download ParseHub for free here.

Website 1: FlightClub.com

Flight Club was the first sneaker site I was introduced to, and it’s a good source for both deadstock (brand-new, unworn) and used pairs. For this example, I’m using the Jordan 3, since it’s my favorite Jordan model.

Flight Club’s navigation bar makes it easy to find deals on the sneakers you’re interested in.

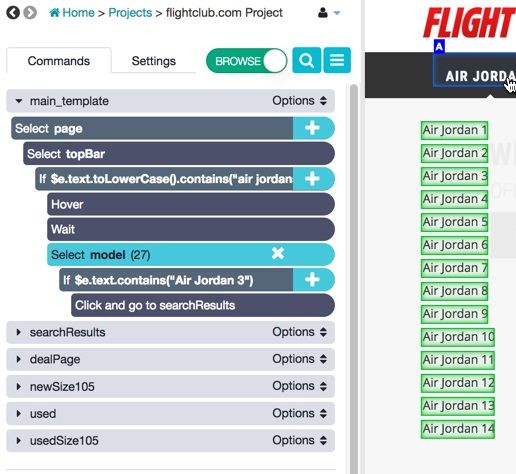

I started by selecting all of the elements in this bar and nesting a condition under that selection to look for the Jordan category. Setting it up this way means you can easily change the condition to target any other category you’re interested in.

Next, I set ParseHub to hover over this element and wait for the menu to appear. Then I added another condition that looks for the Jordan 3 link and clicks it once found.

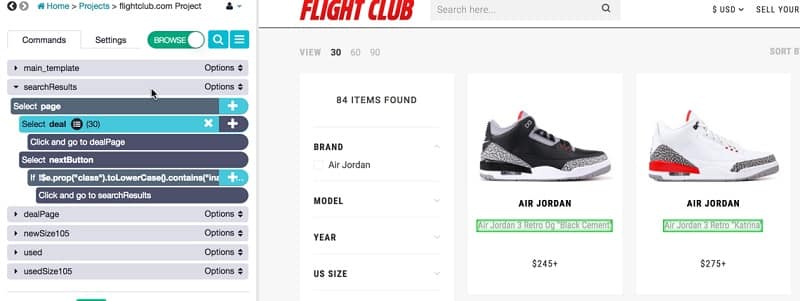
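ParseHub handles this visually, but the logic of "select every nav item, keep the one that matches a condition" can be sketched in a few lines of Python. This is a minimal sketch under assumptions. The link texts and URLs below are hypothetical placeholders rather than Flight Club’s real menu, and `find_link` is a made-up helper, not a ParseHub API.

```python
# Hypothetical nav bar contents: (link text, URL). Placeholders, not Flight Club's real paths.
NAV_BAR = [
    ("Air Jordan", "/air-jordans"),
    ("Nike", "/nike"),
    ("Adidas", "/adidas"),
]

# Hypothetical hover-menu contents, keyed by the category URL they belong to.
SUBMENUS = {
    "/air-jordans": [
        ("Air Jordan 1", "/air-jordans/air-jordan-1"),
        ("Air Jordan 3", "/air-jordans/air-jordan-3"),
    ],
}


def find_link(links, wanted_text):
    """Return the URL of the link whose text equals wanted_text (case-insensitive), or None."""
    target = wanted_text.strip().lower()
    for text, url in links:
        # Exact match, so "Air Jordan 3" won't accidentally match "Air Jordan 30".
        if text.strip().lower() == target:
            return url
    return None


# Step 1: the condition on the nav bar selection picks the category.
category_url = find_link(NAV_BAR, "Air Jordan")
# Step 2: the condition on the hover menu picks the model link.
model_url = find_link(SUBMENUS.get(category_url, []), "Air Jordan 3")
print(category_url, model_url)
```

To target a different category or model, change only the two strings passed to `find_link`. That is the same reason for putting the condition on the selection in ParseHub.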

The listings page shows a starting price under each sneaker, but I wanted more detail. So I set ParseHub to click into each listing, then move on to the next page once it has finished scraping every listing on the current one.

This site’s pagination caused an infinite loop, because the next button is still there on the last page. To stop that, I set a condition that looks for an “inactive” class on the next button. If the button is inactive, ParseHub won’t click it.

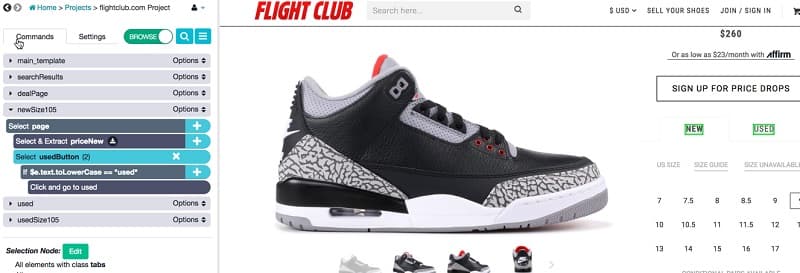
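To see why the "inactive" check matters, here’s a small Python simulation. It assumes a simplified model of the site: asking for a page past the end just serves the last page again. Without a stop condition, a scraper would loop forever. The page data, listing paths, and `get_page` helper are all hypothetical.

```python
# Hypothetical pages: the listing links on each page, plus the CSS classes on its "next" button.
PAGES = [
    {"listings": ["/katrina", "/black-cement"], "next_classes": ["next"]},
    {"listings": ["/true-blue"], "next_classes": ["next"]},
    {"listings": ["/white-cement"], "next_classes": ["next", "inactive"]},
]


def get_page(n):
    """Simulate the problem: requesting a page past the end returns the last page again."""
    return PAGES[min(n, len(PAGES) - 1)]


def scrape_all_listings(max_pages=100):
    """Collect every listing, stopping when the next button carries the "inactive" class."""
    collected = []
    n = 0
    while n < max_pages:  # extra safety cap in case the stop condition never fires
        page = get_page(n)
        collected.extend(page["listings"])  # stand-in for "click each listing and scrape it"
        if "inactive" in page["next_classes"]:
            break  # last page: don't click "next"
        n += 1
    return collected


print(scrape_all_listings())
```

The `max_pages` cap is a belt-and-braces choice. If the site ever changes its class name, the loop still ends instead of hammering the server.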

On each listing page, I set ParseHub to select and extract the sneaker’s colorway, and then trained it on the sizes section.

Using a condition, I set it to click on my size (10.5) and scrape the resulting price for a new pair. Then I repeated the same process for a used pair.
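The size condition boils down to "look up one size, for both the new and the used price." Here’s a minimal Python sketch of that step. It assumes the scraped sizes arrive as a size-to-price table. The prices are made-up numbers for illustration, not real Flight Club data.

```python
# Hypothetical scraped data for one listing: size -> price in USD, per condition.
listing = {
    "colorway": "Katrina",
    "new": {"10": 310, "10.5": 325, "11": 300},
    "used": {"10.5": 240, "11": 215},
}

# Sizes are kept as strings so "10.5" matches exactly (no float rounding surprises).
TARGET_SIZE = "10.5"


def price_for_size(size_table, size=TARGET_SIZE):
    """Return the price for the given size, or None if that size isn't listed."""
    return size_table.get(size)


# One spreadsheet-ready row per listing: colorway plus new and used prices for my size.
row = {
    "colorway": listing["colorway"],
    "new_price": price_for_size(listing["new"]),
    "used_price": price_for_size(listing["used"]),
}
print(row)
```

Returning `None` for a missing size leaves an empty cell rather than a wrong price. That’s what you want when your size is sold out in one condition.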

Website 2: StadiumGoods.com

I find that Stadium Goods has a wider selection of merchandise than Flight Club, so it’s usually my go-to site for looking up sneaker prices. Again, I’m just going to search for Jordan 3s, but you can find pretty much any sneaker you want on their site.

Most of my setup for this project was the same as for my Flight Club project, but there were a few differences.

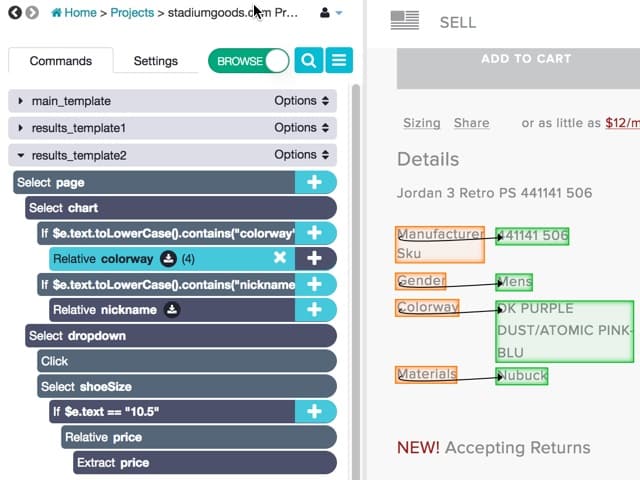

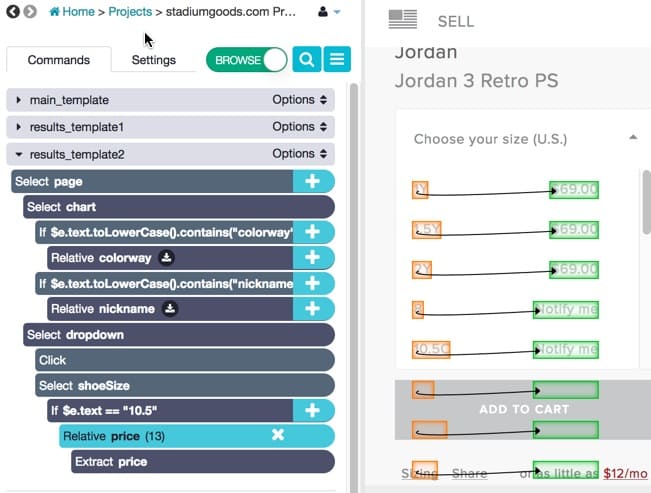

The listing pages have both a colorway field and a nickname field. Since I know most colorways by their nicknames, I set ParseHub to extract both fields, in case a colorway didn’t have a nickname.
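Extracting both fields lets you fall back gracefully later. Here’s a minimal Python sketch of that fallback. It assumes each scraped row has a possibly blank `nickname` and an official `colorway`. The field names and colorway strings are hypothetical placeholders.

```python
def display_name(nickname, colorway):
    """Prefer the nickname; fall back to the official colorway when the nickname is blank."""
    nickname = (nickname or "").strip()
    return nickname if nickname else colorway.strip()


# Hypothetical scraped rows; the colorway strings are placeholders.
rows = [
    {"nickname": "Katrina", "colorway": "Colorway A"},
    {"nickname": "", "colorway": "Colorway B"},
    {"nickname": None, "colorway": " Colorway C "},
]

names = [display_name(r["nickname"], r["colorway"]) for r in rows]
print(names)
```

Treating `None` and whitespace-only nicknames the same way matters because scrapers often return an empty string instead of nothing when a field is missing.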

Also, Stadium Goods lists its sizes in a dropdown menu, so I set ParseHub to select and click the dropdown first. Then I searched for my size the same way I did on Flight Club.

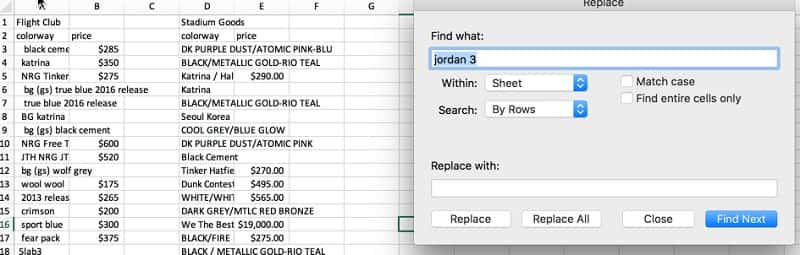

With all the data gathered, it’s time for some Excel. First, I copied both data sets into a clean spreadsheet. The colorways from Flight Club were a little messy, so I used a simple find + replace to isolate them.
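If you’d rather script the cleanup than use find + replace in Excel, a regular expression does the same job. This sketch assumes the messy names look like a shared model prefix followed by a quoted nickname. The sample strings are hypothetical, and Flight Club’s real text may be formatted differently, so adjust the pattern to match what you actually scraped.

```python
import re

# Hypothetical examples of messy scraped names.
raw_names = [
    'AIR JORDAN 3 RETRO "KATRINA"',
    'Air Jordan 3 Retro "Black Cement"',
    'AIR JORDAN 3 RETRO  "TRUE BLUE" ',
]

# The "find" part: the shared model prefix plus any whitespace after it, in any capitalization.
PREFIX = re.compile(r"^\s*air jordan 3 retro\s*", re.IGNORECASE)


def isolate_colorway(name):
    """Delete the prefix ("replace" with nothing), strip quotes and spaces, normalize case."""
    cleaned = PREFIX.sub("", name)
    return cleaned.strip().strip('"').strip().title()


print([isolate_colorway(n) for n in raw_names])
```

Normalizing capitalization here makes the later lookup step simpler, because the same colorway from both sites ends up spelled the same way.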

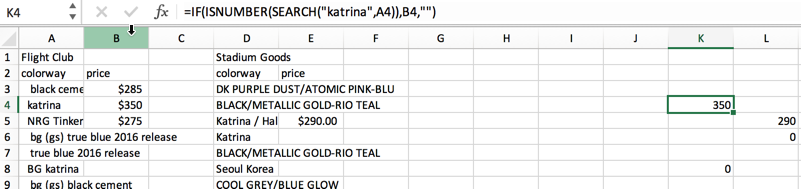

I then set up a simple formula to look for a specific colorway (Katrina, in this example) and return the shoe’s price if it’s found.
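The exact formula isn’t shown here. The idea is an exact-match lookup, similar in spirit to Excel’s VLOOKUP with exact matching, run against each site’s data. Here’s a Python sketch of that, extended to pick the cheaper site. The rows and prices are made-up sample data, and `lookup_price` and `best_price` are hypothetical helpers.

```python
# Hypothetical cleaned rows from each site: (colorway, price in USD for size 10.5).
flight_club = [("Katrina", 325), ("Black Cement", 410), ("True Blue", 290)]
stadium_goods = [("Black Cement", 395), ("Katrina", 300)]


def lookup_price(rows, colorway):
    """Exact-match lookup: return the price for the colorway, or None if it isn't listed."""
    target = colorway.strip().lower()
    for name, price in rows:
        if name.strip().lower() == target:
            return price
    return None


def best_price(colorway, sources):
    """Return (site, price) for the cheapest site listing the colorway, or None if none do."""
    offers = []
    for site, rows in sources.items():
        price = lookup_price(rows, colorway)
        if price is not None:
            offers.append((price, site))
    if not offers:
        return None
    price, site = min(offers)  # tuples compare by price first
    return site, price


sources = {"Flight Club": flight_club, "Stadium Goods": stadium_goods}
print(best_price("Katrina", sources))
```

Adding a third retailer is just one more entry in `sources`, which is the main advantage over maintaining the comparison by hand.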

After that, it’s just a matter of pulling up the listing you’re interested in. You can also set ParseHub to extract each listing’s URL to make finding it even easier! This project should be easy to recreate by following this article, but if you have any questions about your own project, feel free to contact us at hello[at]parsehub[dot]com.

[This post was originally written on June 25, 2018 and updated on August 1, 2019]