Extracting data from the internet can be incredibly useful.

It can help with your research, APIs, Applications, Databases and more.

In many cases, you might be interested in scraping this data into a Google Sheet spreadsheet to allow for easier access and sharing.

Today, we will use a free web scraper to extract data from a website to Google Sheets.

Web Scraping data into Google Sheets

For this example, we will use ParseHub, a free and powerful web scraper that can extract data from any website.

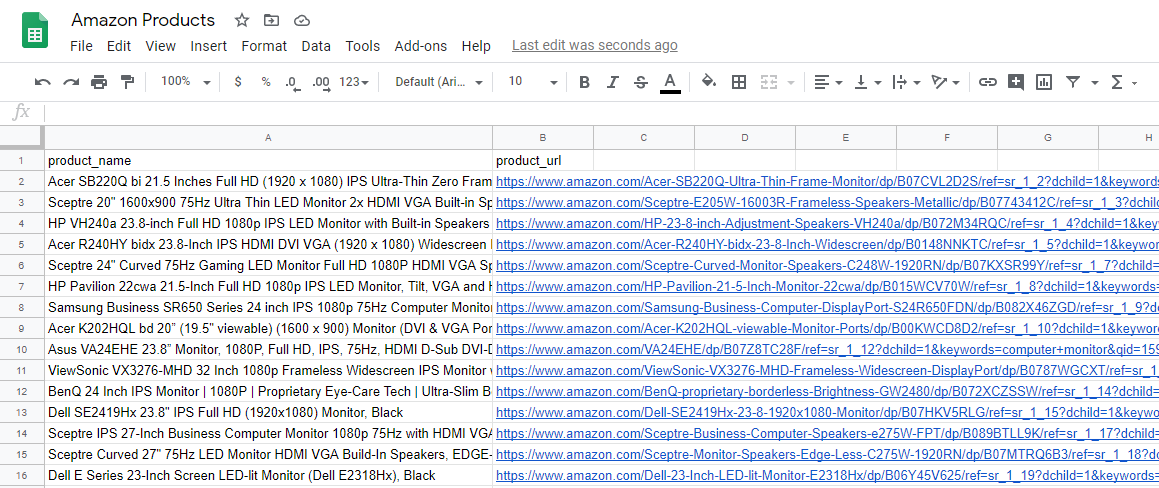

We will also extract data from Amazon’s results page for the term “computer monitor”. We will then extract this data on to a Google Sheets spreadsheet that will be automatically updated.

Make sure to download ParseHub for free before getting started.

Setting Up Your Project

Now, let’s get scraping.

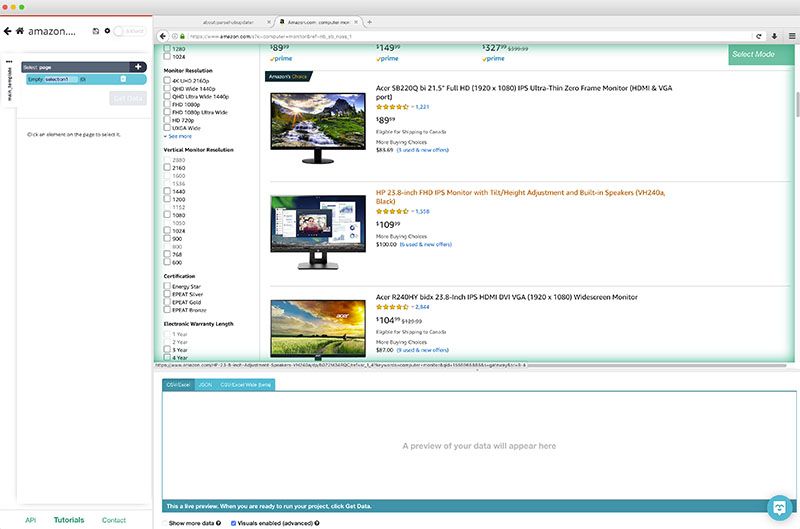

- Open ParseHub and click on “New Project” and enter the URL you will be scraping. Once again, for this example, we will be scraping data from Amazon’s results page for the term “computer monitor”. The page will now render inside the app.

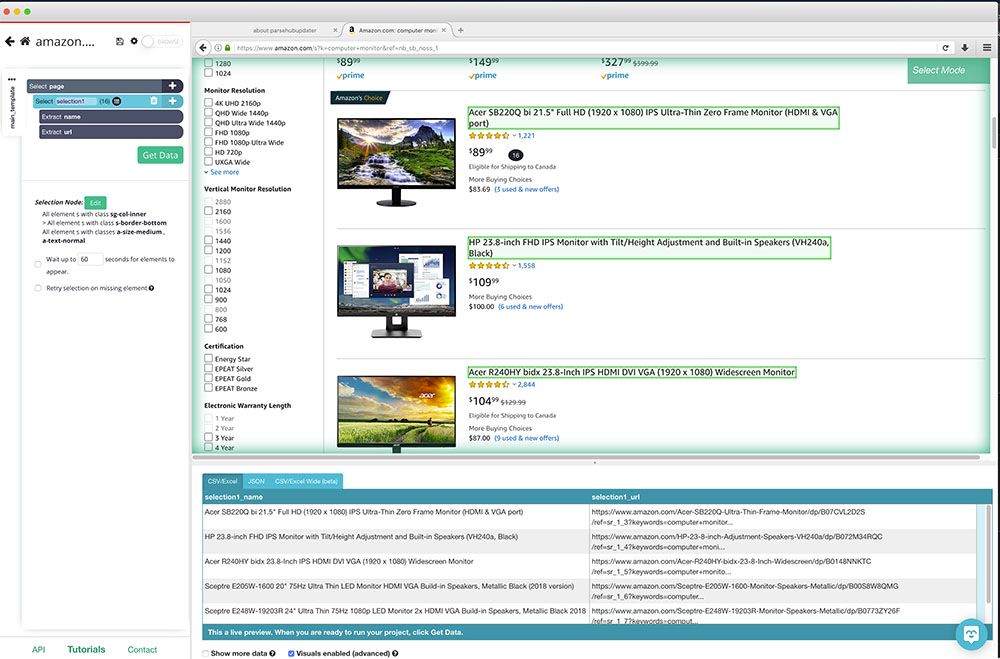

- Once the page is rendered, a select command will be created by default. Start by clicking on the first product name on the page. It will be highlighted in green to indicate that it has been selected.

- Click on the second product name on the list to select them all. All product names will now be highlighted in Green. On the left sidebar, rename your selection to “product”.

You will notice that ParseHub is now pulling each products’ name and URL.

For now, we will keep our scraping project quite simple. However, if you want to scrape more data from Amazon, such as product pricing and details, check our guide on how to scrape Amazon Product data.

Scheduling Future Scrapes

You can now extract data from ParseHub to Google Sheets. However, you might want to schedule your scrape to pull data onto Google Sheets on a schedule.

If you just want to do a one-time scrape, skip to the next section.

Note: Project Scheduling is a paid ParseHub Feature.

To schedule your scraping project, follow these steps:

- Click on the green Get Data button on the left sidebar.

- Click on the “Schedule” button.

- From the dropdown, you can select how often you’d like to run your scrape and at what time.

- Once you’ve set your schedule, click on Save and Schedule.

- Now your project will run automatically at your scheduled times. A new tab for this schedule will be created on the "Get Data" page. You can click on this tab to open a page where your data can be downloaded, after the first time it is scheduled to run. Your data will be available in Excel and JSON format.

Next, we will go over how to automatically extract your results to Google Sheets.

Exporting Data Directly to Google Sheets

ParseHub allows you to extract your scrape results directly on to Google Sheets via its API keys.

Here’s how to set it up:

- Go to the setting page of your project.

- Find your API key by clicking on the "profile" icon in the top-right corner of the toolbar. Click "Account" and you will see your API key listed.

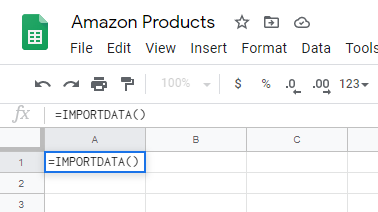

- Open a new Google Sheet spreadsheet.

- On cell A1, type in =IMPORTDATA()

- Between the parenthesis, enter the following URL:

https://www.parsehub.com/api/v2/projects/PROJECT_TOKEN/last_ready_run/data?api_key=API_KEY&format=csv - In the URL above, replace PROJECT_TOKEN with the actual project token from the "Settings" tab of your project.

- Replace the API_KEY with the API key from your account.

Once you’ve finished up your formula, your data will be auto-populated once you run your scrape at least once. To do this, use the green Get Data button on the left sidebar and click on “Run”.

Closing Thoughts

You now know how to automatically extract data from any website to Google Sheets.

If you run into any issues while setting up your project, reach out to us via the live chat on our site and we will be happy to assist you.

Happy Scraping!