Websites are full of valuable information, and the URLs themselves are part of it.

For example, you might want to scrape a list of product page URLs, a list of direct links to important files, or a list of URLs for real estate listings. You might even want to scrape a list of vacations or hotels, which can be done by scraping Expedia!

In this updated guide, we will show you how to use a free web scraper to scrape a list of URLs from any website in 2023. You can then download this list as a CSV or JSON file, and even connect your application to the data via ParseHub's API.

A Free and Powerful Web Scraper

In this guide, we will be using ParseHub, a free and powerful web scraper that can extract data from any website. Make sure to download and install ParseHub for free before we get started.

For this example, we will scrape product URLs from Amazon for the keyword “laptop”.

Scraping a List of URLs

Now it’s time to get started.

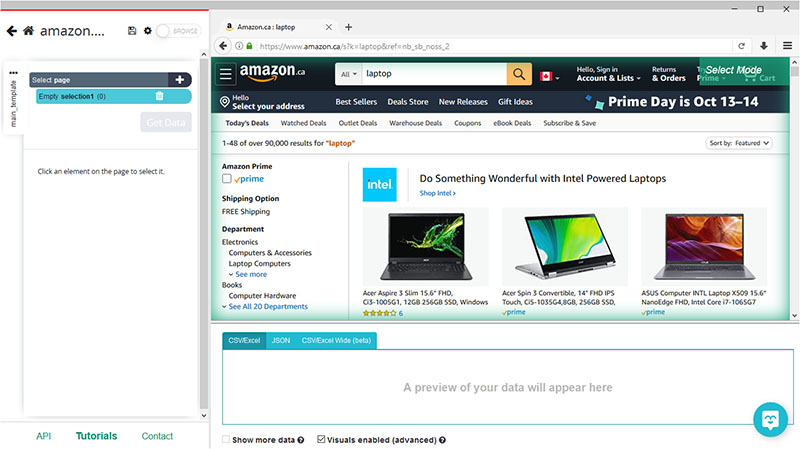

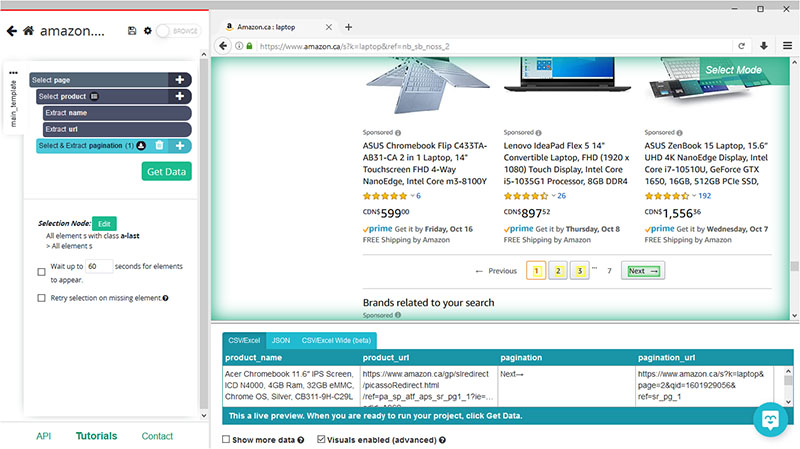

- Install and open ParseHub. Click on New Project and enter the URL you will be scraping. In this case, we will be scraping product URLs from Amazon’s search results page for the term “Laptop”. The page will now render inside the app.

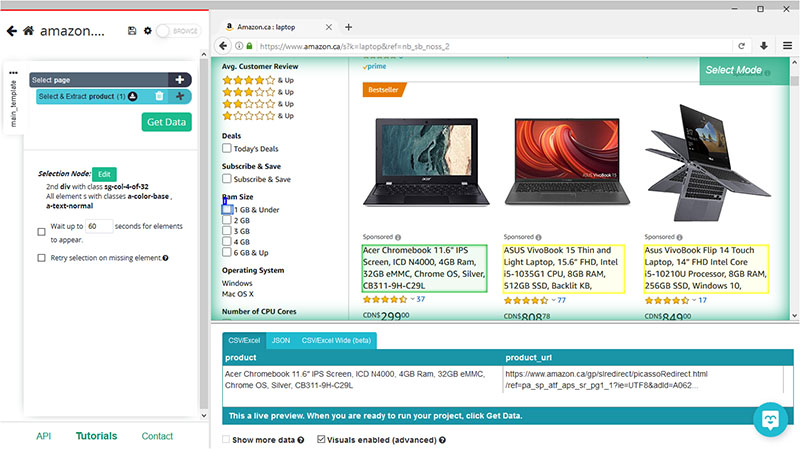

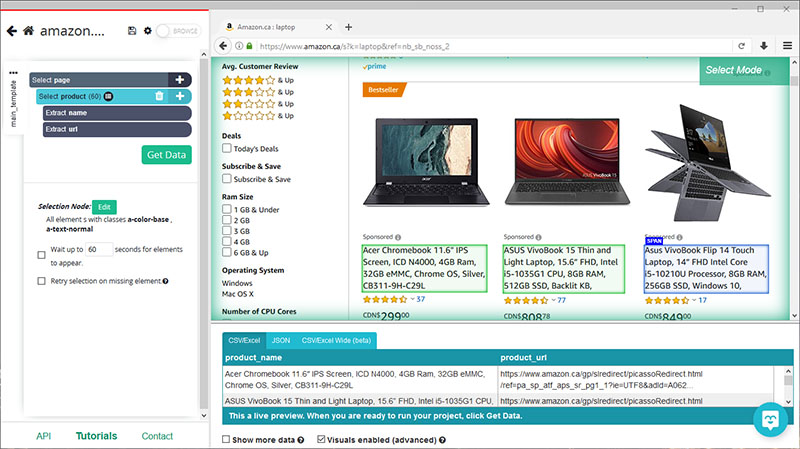

- A select command will be created by default. Start by clicking on the name of the first product on the page. It will be highlighted in green to indicate that it’s been selected, and the rest of the listings will be highlighted in yellow. On the left sidebar, rename your selection to “product”.

- Now click on the second product on the list to select them all (you might have to click on more products if ParseHub does not select them all automatically). You will notice that ParseHub is now pulling the name and URL for each product on the page.
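If you are curious what "pulling the name and URL" amounts to, here is a minimal Python sketch of the general idea. It is not how ParseHub works internally. The `LinkCollector` class, the `extract_links` helper, the sample HTML and the `example.com` address are all made up for illustration, and only the standard library is used.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect the link text and absolute URL of every <a href=...> tag."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []      # finished {"name": ..., "url": ...} entries
        self._href = None    # URL of the <a> tag currently open, if any
        self._text = []      # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Turn relative links into absolute ones.
                self._href = urljoin(self.base_url, href)
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse runs of whitespace in the link text.
            name = " ".join("".join(self._text).split())
            self.links.append({"name": name, "url": self._href})
            self._href = None


def extract_links(html, base_url):
    parser = LinkCollector(base_url)
    parser.feed(html)
    return parser.links


# Hypothetical markup standing in for a search results page.
SAMPLE_HTML = """
<div class="result"><a href="/dp/EXAMPLE1"><span>Laptop A</span></a></div>
<div class="result"><a href="/dp/EXAMPLE2">Laptop  B</a></div>
"""

links = extract_links(SAMPLE_HTML, "https://www.example.com/s?k=laptop")
for link in links:
    print(link["name"], link["url"])
```

Each entry pairs a link's visible text with its full URL, which is the same kind of name and URL pair that the “product” selection collects.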

If you want to scrape more data from Amazon, read our guide on how to scrape Amazon product data including prices, details and more.

Adding Pagination

Now, let’s instruct ParseHub to navigate to further pages of results and extract more product names and URLs.

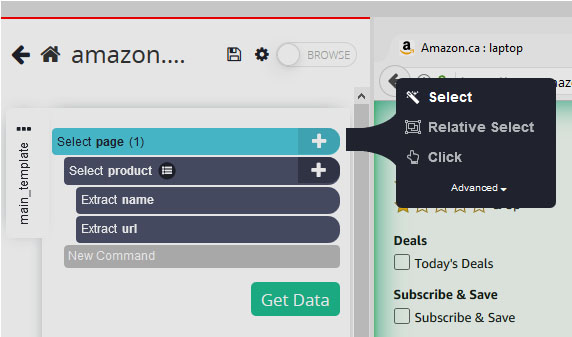

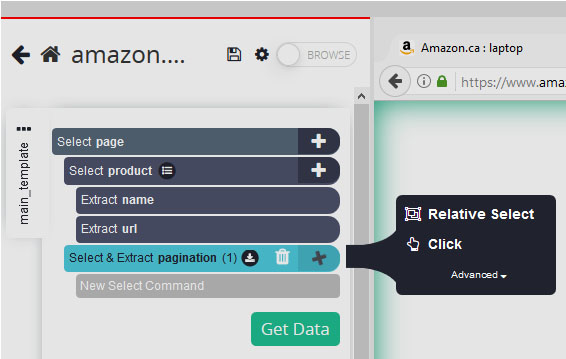

- Click on the PLUS(+) sign next to your “page” selection and choose the “select” command.

- Scroll all the way to the bottom of the page and click on the “next page” button to select it. On the left sidebar, rename your selection to “pagination”.

- Click on the PLUS(+) sign next to the “pagination” selection and choose the “click” command.

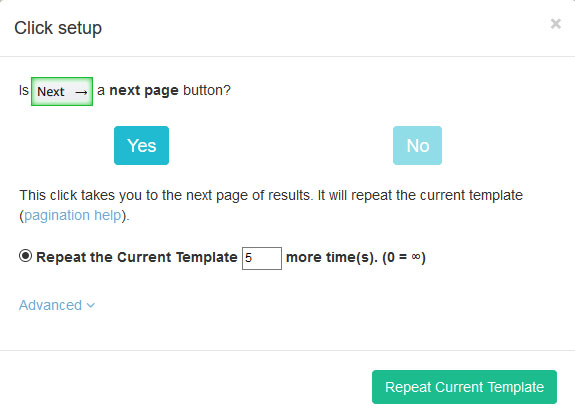

- A pop-up will appear asking you if this is a “next page” link. Click on “yes” and enter the number of times you’d like ParseHub to click on the button. For this example, we will do it 5 more times.
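The pagination steps above boil down to a simple loop: scrape the current page, follow the “next page” link, and stop after a fixed number of clicks. The Python sketch below illustrates that logic only. The `scrape_with_pagination` function and the in-memory `pages` dictionary are hypothetical stand-ins for real result pages, not anything ParseHub provides.

```python
def scrape_with_pagination(pages, start, max_clicks):
    """Collect URLs from the start page, then follow "next" up to max_clicks times."""
    urls = []
    current = start
    clicks = 0
    while current is not None:
        page = pages[current]
        urls.extend(page["urls"])
        if clicks >= max_clicks:
            break  # click budget used up
        current = page.get("next")  # None when there is no next page
        clicks += 1
    return urls


# Hypothetical site with 8 result pages, one URL per page.
pages = {
    n: {
        "urls": [f"https://www.example.com/item-{n}"],
        "next": n + 1 if n < 8 else None,
    }
    for n in range(1, 9)
}

result = scrape_with_pagination(pages, start=1, max_clicks=5)
print(len(result), "URLs collected")
```

With 5 clicks, the loop covers the first page plus 5 more, so 6 pages in total. It also ends early if a page has no “next” link.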

Running your Scrape and Extracting URLs

It’s now time to run your scrape and extract the data you’ve selected as a CSV or JSON file.

Start by clicking on the green “Get Data” button on the left sidebar.

Here you will be able to Test, Schedule or Run your scrape job. In this case, we will run it right away.

Closing Thoughts

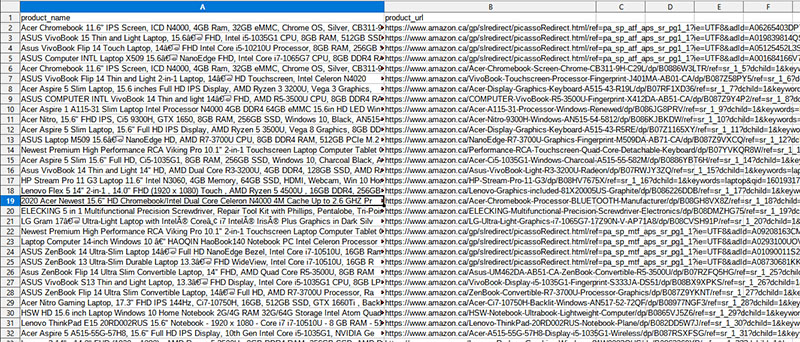

ParseHub is now off to scrape the data you’ve selected from Amazon’s website.

Once the scrape job is completed, you will be able to download your data as a CSV or JSON file, or connect it directly to your app through ParseHub's API.
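Once you have the JSON file, a few lines of Python can turn it into a clean, de-duplicated list of URLs. The sketch below assumes the export is an object with a `product` list whose items have `name` and `url` fields, matching the selection name used in this guide. Check your own file, since the exact shape depends on how your project is set up. The helper names and sample data are hypothetical.

```python
import csv
import io
import json


def product_rows(export):
    """Return unique (name, url) rows from a parsed export, in first-seen order."""
    seen = set()
    rows = []
    for item in export.get("product", []):
        url = item.get("url")
        if not url or url in seen:
            continue  # skip entries without a URL and repeated URLs
        seen.add(url)
        rows.append((item.get("name", ""), url))
    return rows


def rows_to_csv(rows):
    """Render the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["name", "url"])
    writer.writerows(rows)
    return buffer.getvalue()


# Assumed export shape; a real file would be read with json.load() instead.
SAMPLE_EXPORT = json.loads("""
{"product": [
  {"name": "Laptop A", "url": "https://www.example.com/dp/EXAMPLE1"},
  {"name": "Laptop B", "url": "https://www.example.com/dp/EXAMPLE2"},
  {"name": "Laptop A", "url": "https://www.example.com/dp/EXAMPLE1"}
]}
""")

rows = product_rows(SAMPLE_EXPORT)
csv_text = rows_to_csv(rows)
print(csv_text)
```

Duplicates are worth filtering because the same product can show up on more than one results page.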

If you need help with scraping a list of URLs from any website, feel free to contact our live chat support.

![[2023 Method] How to Scrape a List of URLs from Any Website](/blog/content/images/size/w2000/2020/10/scrape-urls-from-website.jpg)