There are several ways to keep track of cryptocurrency prices, from finance and crypto websites to modern investing apps.

However, you might want to access information on cryptocurrency in a format that is more convenient than a website and that gives you more details than an investing app. After all, the more information you have about the cryptocurrency you are investing in, the better investment decisions you can make.

In this guide, we will go over the process of pulling information from a cryptocurrency website like CoinGecko into an Excel spreadsheet.

CoinGecko and Web Scraping

Using a web scraper, you can choose a specific set of cryptocurrencies from CoinGecko and extract exactly the information you need for each coin. For this example, we will extract crypto data from CoinGecko.

To complete this task, we will use ParseHub, a free web scraper.

Getting Started

- Make sure to download and open ParseHub. Then, click on New Project.

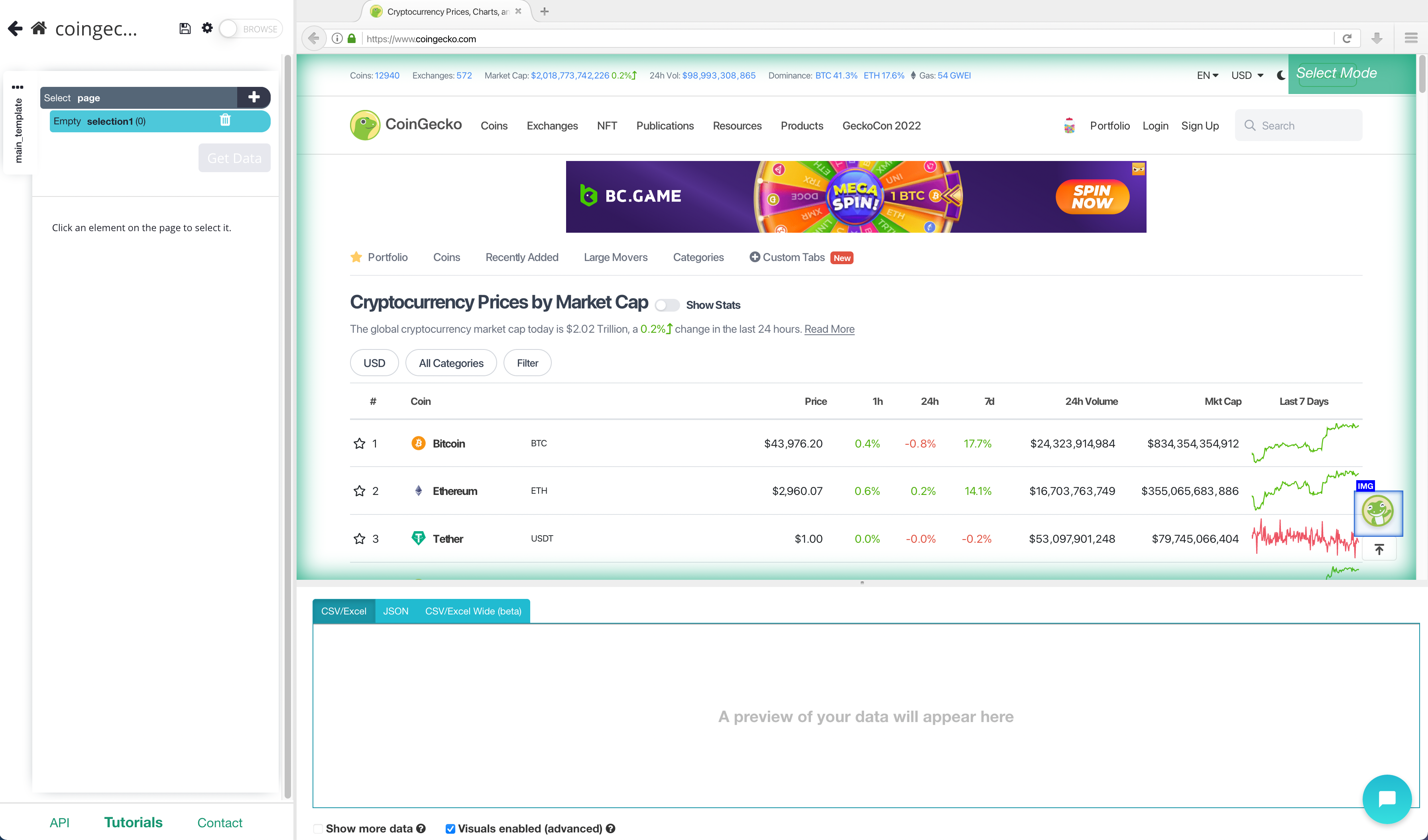

- Once you’ve created your new project, submit the URL you’d like to scrape. In this case, we have selected the CoinGecko page that keeps track of cryptocurrency prices.

Scraping CoinGecko

- Once you submit the URL for your project, ParseHub will render the webpage. You will now be able to select the first element you’d like to extract.

- Scroll down to the list of cryptocurrencies and click on the first coin on the list. In this case, it’s Bitcoin. It will be highlighted in green to indicate that it has been selected.

- On the left sidebar, rename the selection to coin. ParseHub is now pulling the name and the details-page URL for this coin.

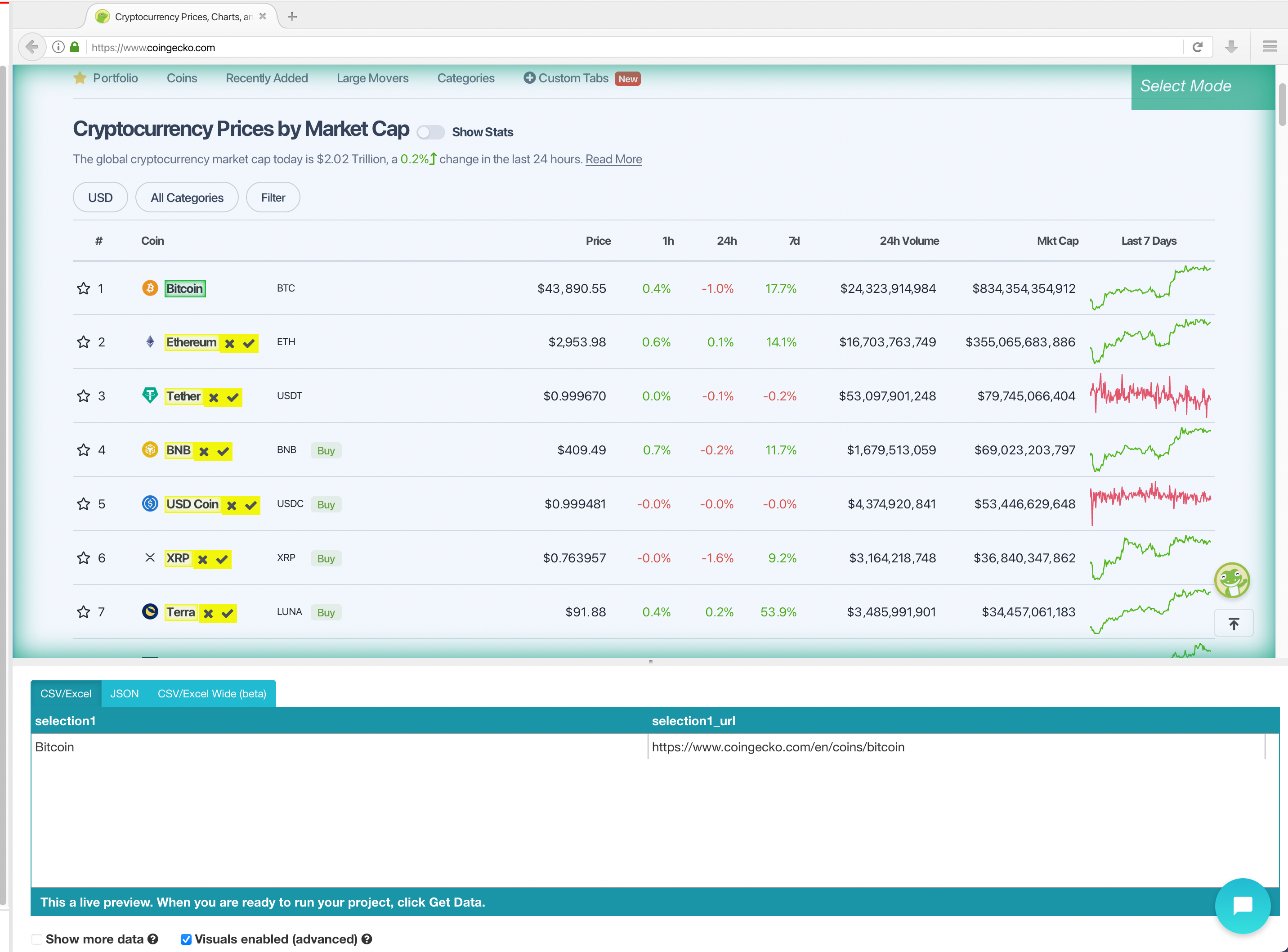

- Now, we will select the rest of the cryptocurrencies in the list, which are highlighted in yellow. Click on the second coin on the list to select them all. They will all now be highlighted in green.

- We will now ask ParseHub to also pull the symbol. To do this, click on the PLUS (+) sign next to your coin selection and choose the Relative Select command.

- Using the Relative Select command, click on the first coin on the list and then on the symbol next to it. An arrow will appear to show the association. Then rename your selection to symbol.

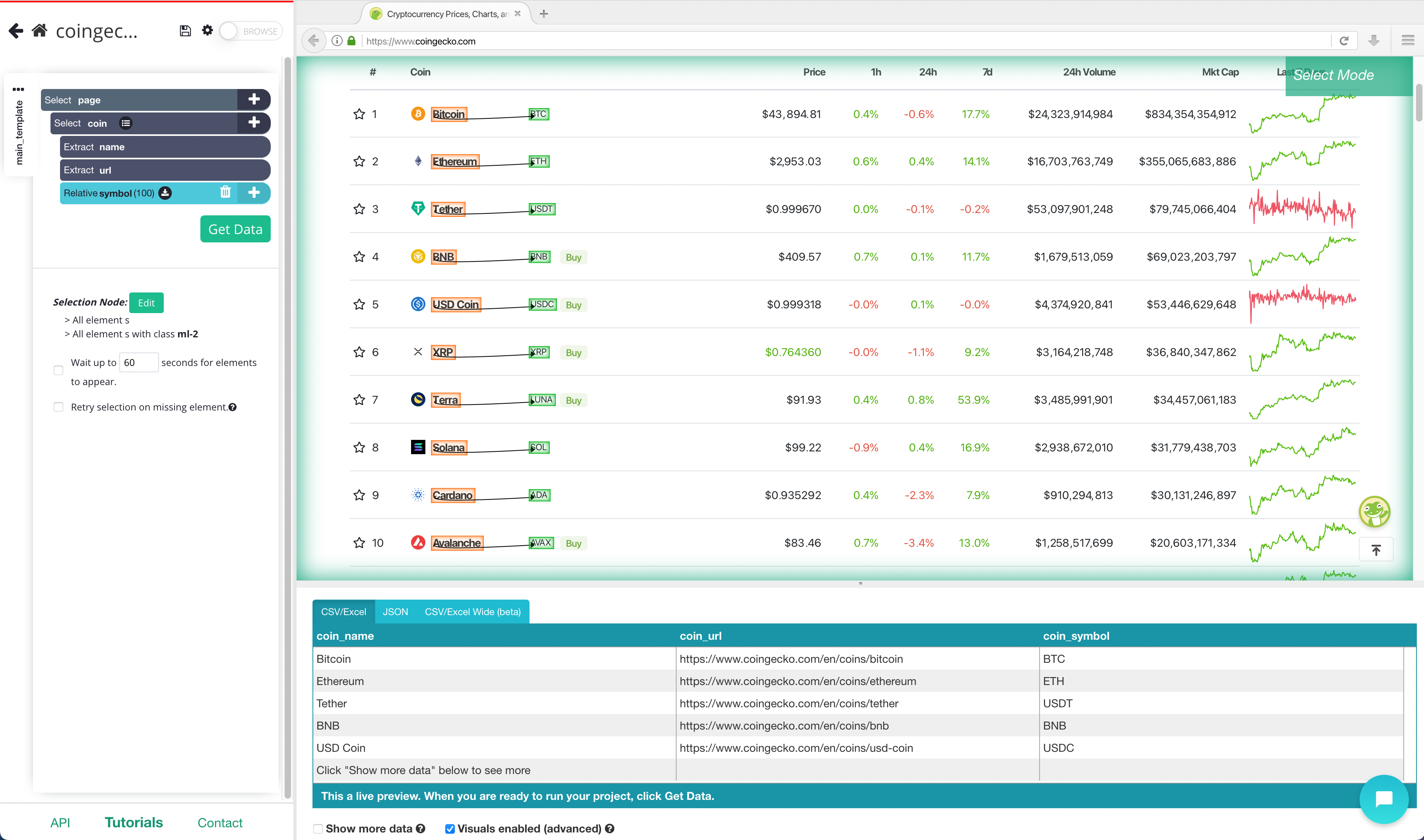

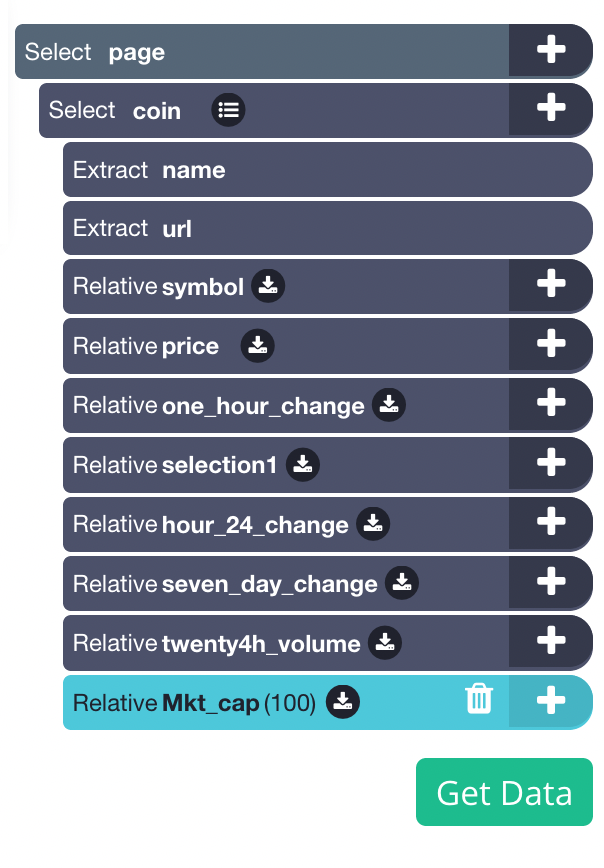

- Repeat the previous two steps to select the rest of the data fields, including price, 1h, 24h and 7d change, 24h volume and market cap. Rename all your selections accordingly. Your project should now look like this:

Adding pagination

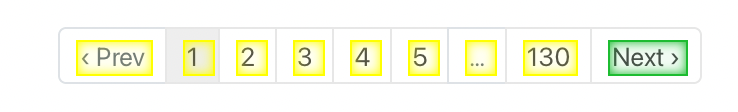

Now, you might want to scrape several pages’ worth of data for this project. So far, we are only scraping page 1 of the crypto data. Let’s set up ParseHub to navigate to the next 5 pages of results.

- Click on the PLUS (+) sign next to the page selection and choose the Select command. Then select the Next page link at the bottom of the page. Rename the selection to next.

- By default, ParseHub will extract the text and URL from this link, so expand your new selection and remove these 2 extract commands.

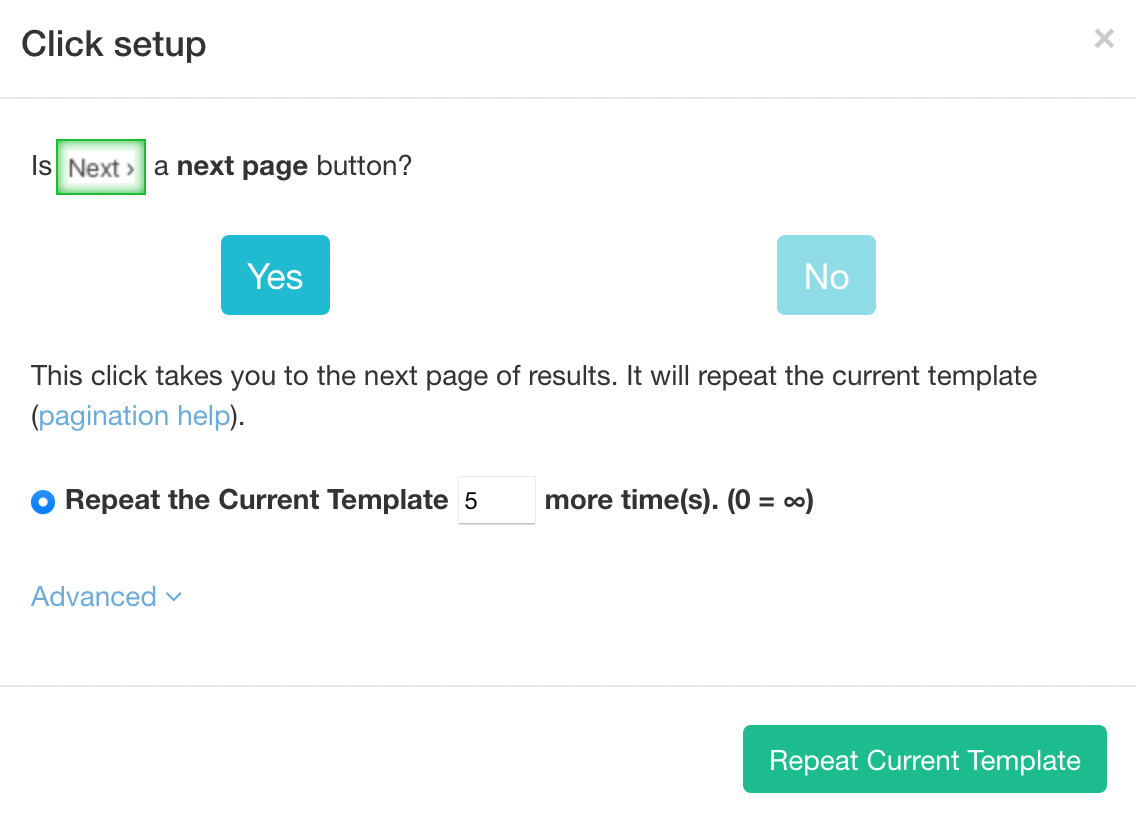

- Now, click on the PLUS (+) sign of your next selection and use the Click command.

- A pop-up will appear asking if this is a “Next” link. Click Yes and enter the number of pages you’d like to navigate to. In this case, we will scrape 5 additional pages.
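The fields selected above (price, percentage changes, volume and market cap) are scraped as text, so a spreadsheet may treat them as strings rather than numbers. The Python snippet below is a minimal sketch of one way to clean such values after export. The function name `parse_number`, the row keys and the sample values are made up for illustration and are not real CoinGecko data or part of ParseHub.

```python
from typing import Optional


def parse_number(text: str) -> Optional[float]:
    """Convert scraped text such as "$43,210.55" or "-1.2%" to a float.

    Returns None when the text does not contain a usable number.
    """
    # Strip whitespace, the currency symbol, thousands separators and the percent sign.
    cleaned = text.strip().replace("$", "").replace(",", "").replace("%", "")
    try:
        return float(cleaned)
    except ValueError:
        # For example an empty cell or a placeholder such as "?".
        return None


# Hypothetical scraped row; keys mirror the selection names used in this guide.
row = {"name": "Bitcoin", "symbol": "BTC", "price": "$43,210.55", "change_24h": "-1.2%"}

price = parse_number(row["price"])
change_24h = parse_number(row["change_24h"])
```

Note that a percentage is returned as the plain number shown on the page (for example -1.2 for "-1.2%"), not as a fraction.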

Running and Scheduling your Scrape Job

Your scrape job is now set up. However, you might not want to extract the data right in the middle of the day.

You might be more interested in pulling data at regular intervals to see how coin prices change. We will now ask ParseHub to run our scrape job daily at 9 AM EST.

Pro-Tip: Project Scheduling is a paid ParseHub feature.

To do this, on the left sidebar click on “Get Data”. Then click on the Schedule button next to the Run button.

On this menu, enter the time you’d like the scrape to run and click on “Save and Schedule”.

Closing Thoughts

Exporting this kind of financial data on a schedule can be quite valuable.

But you might be interested in taking it to the next level.

For example, you can set up your scrape job to output to a Google Spreadsheet to create an online document with all the data you have extracted.
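If you prefer to build the spreadsheet yourself, the Python sketch below shows one way to turn exported JSON results into CSV text that Excel or Google Sheets can open. It assumes the results contain a list under the key `coin` (the selection name used in this guide), with one object per coin. The field list, the function name `results_to_csv` and the sample record are assumptions for illustration, so adjust them to match your own export.

```python
import csv
import io
import json

# Assumed column names; change these to match the selections in your project.
FIELDS = ["name", "url", "symbol", "price"]


def results_to_csv(results_json: str, list_key: str = "coin") -> str:
    """Flatten a JSON export with one list of coin records into CSV text."""
    data = json.loads(results_json)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for record in data.get(list_key, []):
        # Missing fields become empty cells instead of raising an error.
        writer.writerow({field: record.get(field, "") for field in FIELDS})
    return buffer.getvalue()


# Made-up sample record standing in for a real export.
sample = json.dumps(
    {
        "coin": [
            {
                "name": "Bitcoin",
                "url": "https://example.com/bitcoin",
                "symbol": "BTC",
                "price": "$43,210.55",
            }
        ]
    }
)

csv_text = results_to_csv(sample)
```

Writing `csv_text` to a file with a `.csv` extension gives you something both Excel and Google Sheets can import. Values that contain commas, such as the price above, are quoted automatically by the `csv` module.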
